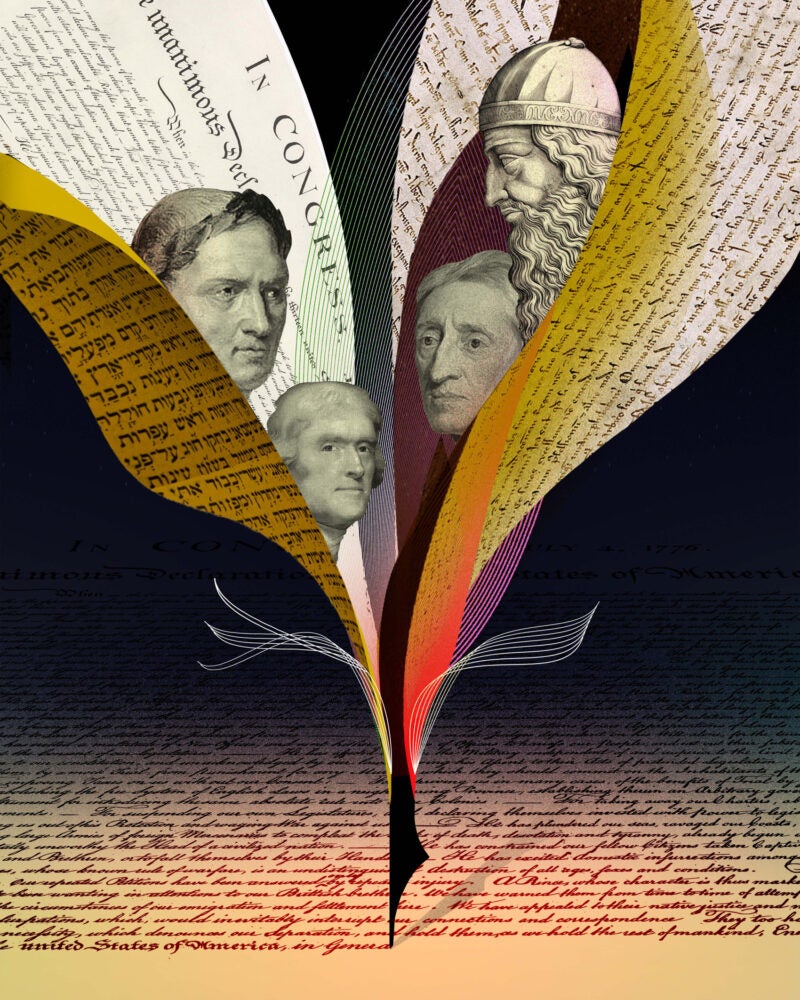

The Rule of Law

Born in ancient thought and forged through revolution, the idea that no person is above the law has been tested anew in every era. Harvard Law faculty examine this foundational legal principle.

Featured Areas of Interest

- Administrative and Regulatory Law

- American Indian Law

- Animal Law

- Antitrust

- Arts, Entertainment, and Sports Law

- Bankruptcy and Commercial Law

- Children and Family Law

- Civil Litigation

- Civil Rights

- Conflict of Laws

- Constitutional Law

- Criminal Law and Procedure

- Contracts

- Comparative Law

- Corporate and Transactional Law

- Courts, Jurisdiction, and Procedure

- Disability Law

- Education Law

- Election Law and Democracy

- Employment and Labor Law

- Environmental Law and Policy

- Finance, Accounting, and Strategy

- Financial and Monetary Institutions

- Gender and the Law

- Health, Food, and Drug Law

- Human Rights

- Immigration Law

- Intellectual Property

- International Law

- Jurisprudence and Legal Theory

- Law and Economics

- Law and Philosophy

- Law and Political Economy

- Law and Religion

- Leadership

- Legal History

- Legal Profession and Ethics

- LGBTQ+

- National Security Law

- Negotiation and Alternative Dispute Resolution

- Poverty Law and Economic Justice

- Private Law

- Property

- Torts

- Race and the Law

- State and Local Government

- Tax Law and Policy

- Technology Law and Policy

- Trusts, Estates, and Fiduciary Law

500+ Courses & Seminars

47 Clinics & Student Practice Orgs

88 Student Organizations

Limitless Possibilities