It’s been more than a decade since the United States first publicly blamed another country for a cyberattack, an accusation detailed in a 31-count indictment against five members of the Chinese military who hacked into the systems of American nuclear, metal, and solar companies over a period of eight years.

That public attribution of economic espionage in 2014 marked a major shift for the United States, which had previously worked behind the scenes to address digital intrusions that came from beyond its borders, if it did anything at all. Part of the rationale for naming and shaming the perpetrators was to deter bad actors from carrying out future hacks.

Deterrence as a defense strategy, however, has so far proved to be something of a dud. Today, cyberattacks seem almost commonplace; breaches of public and private entities are everyday news. So-called ransomware attacks, in which hackers lock up an entity’s system or files and demand payment to restore functionality, have proliferated. In 2024, in a federal indictment, a North Korean intelligence operative was accused of using the proceeds from ransomware attacks he’d carried out against hospitals to fund additional cyberattacks on government entities around the world, among them two U.S. Air Force bases. Other attackers, including groups sponsored by countries such as China and Russia, do not announce their presence; their goal is to stay hidden long enough to steal information that can later be used for financial or geopolitical gain. By hacking into U.S. telecommunications companies in 2024, China apparently intercepted surveillance data that was meant for law enforcement agencies.

Cyberattacks may be difficult to prevent, but that doesn’t mean policymakers, governments, and the private sector have given up trying. Harvard Law School faculty and alumni who have worked on cybersecurity issues in their research and practice, some at the highest levels of the U.S. government, say protecting people and information from cyber intrusions requires a variety of approaches. Among them: the use of criminal and international law to hold bad actors accountable; a regulatory regime that requires companies to take steps to safeguard consumer information; and close cooperation between the private sector and the government to ensure an efficient and effective response.

“We don’t say the fact that homicides are still committed means we should drop all our attempts to prevent them from occurring,” says John P. Carlin ’99, a partner at Paul, Weiss, where he is chair of the cybersecurity and data protection practice group. “What we need to do in the cyber context — and it’s evolving and improving over time — is increase the costs [for the attackers] so that we can ultimately reduce the number of people being hit by these attacks.”

Setting and Enforcing Legal Boundaries

In the case of an attack sponsored or carried out by another country, even when an indictment doesn’t lead to an extradition or an actual prosecution, it can still serve an important purpose, according to Harvard Law Professor Kristen Eichensehr.

Eichensehr has written extensively about attribution of cyberattacks in the context of international law, including in a 2020 piece for the UCLA Law Review called “The Law and Politics of Cyberattack Attribution.”

Attribution — in a criminal indictment, sanctions, or other official statements — she argues, is an important part of building international norms and what’s known as customary international law, or law that is based on established practices rather than formal treaties or conventions.

“By outing government hackers in particular and condemning what they are doing, you begin to draw lines and bring clarity about what is permissible and what is impermissible,” Eichensehr says.

Carlin agrees. Before he joined Paul, Weiss, he worked on cybersecurity matters in government, including as principal associate deputy attorney general in the Biden administration, assistant U.S. attorney general under President Barack Obama ’91, and special counsel to the FBI director under President George W. Bush. He has written two books on the topic and has worked on matters arising from some of the most high-profile cyberattacks in history, including North Korea’s attack against Sony Pictures over its movie “The Interview” and the ransomware attack on Colonial Pipeline in 2021, as well as that first indictment against the Chinese military personnel in 2014.

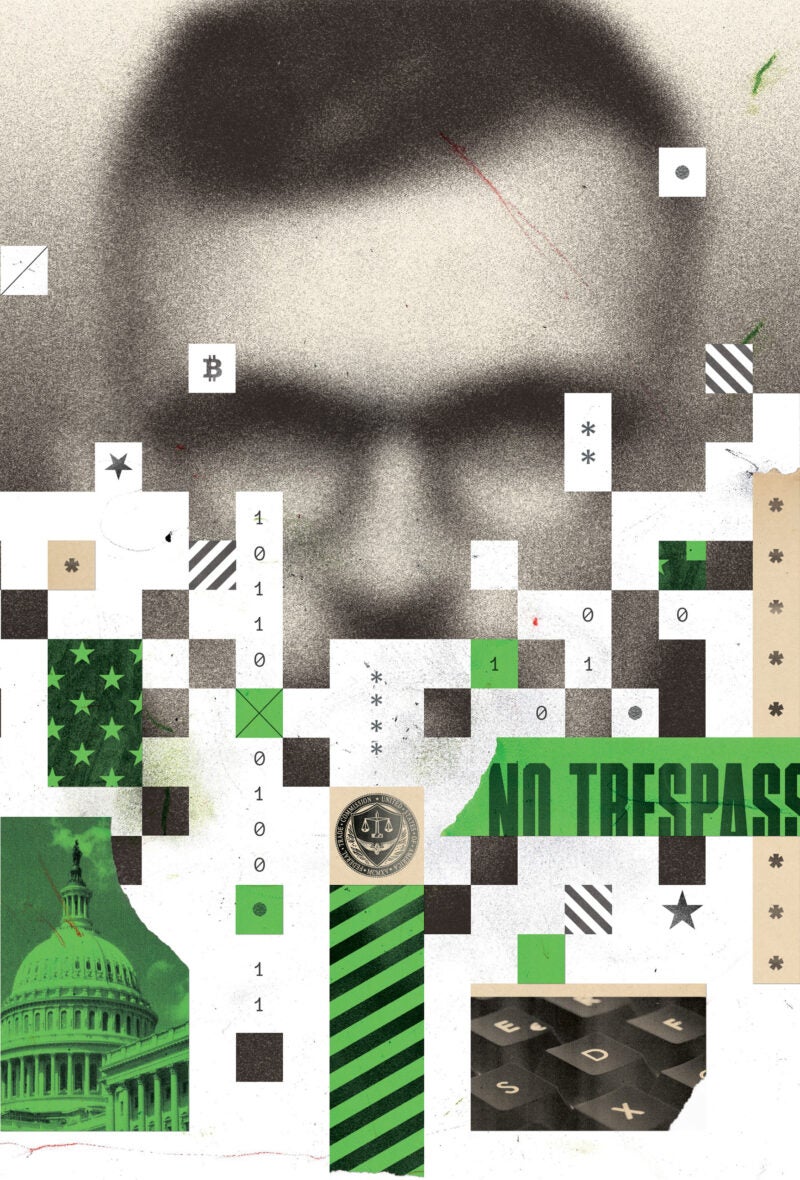

“If you allow someone to walk across your lawn continually, they earn the legal right to cross your lawn,” he says. “In this case, we were allowing the Chinese to hack so noisily, they didn’t care about being caught. We were creating an easement, creating a norm, which then has the force of international law.”

That first indictment and others that have followed since, Carlin explains, were a kind of No Trespassing sign to China and the rest of the world.

Even if such signaling can’t prevent an intrusion, it can often prevent something much worse: a decision by a state or nonstate actor to use what they’ve learned through a hack to inflict significant harm.

“Other states have not used the access they’ve had in the most destructive ways,” Eichensehr says. “That’s partly because the United States has some effective detection and defensive strategies, but it’s also partly [due to] a lack of desire by other states to use their access in destructive ways. Public statements by the U.S. government about the kinds of behavior it considers out of bounds — and the consequences the United States would impose if states engaged in that behavior — may tamp down the likelihood of destructive actions.”

Regulating Cyberdefenses

The U.S. government also seeks to hold private companies accountable when their systems are breached.

Questions about whether and how to do so can be complicated, says Timothy Edgar ’97, a lecturer at Harvard Law School who advised the National Security Council on privacy and cybersecurity issues from 2009 to 2010 and later helped Brown University launch its cybersecurity degree program.

“There’s a general philosophy that you don’t want to victimize a company twice,” he says.

That said, some level of regulation is required.

“You can’t regulate the hackers,” Edgar says, “but you can require that companies assess their risk and implement a cybersecurity program that addresses and mitigates those risks.”

At the federal level, the Federal Trade Commission has been an active player in the cybersecurity space, including through a 1914 law that prohibits “unfair or deceptive acts or practices.” According to a report from the Atlantic Council in 2024, the agency has pursued 47 cases in the cybersecurity context using that particular law in the past two decades or so.

Newer regulations and agencies also have put companies on notice. Congress created the Cybersecurity and Infrastructure Security Agency, which aims to coordinate responses to cyberthreats (although rules that would require companies to report cyber incidents and ransomware payments to that agency have not been finalized). In 2023, the U.S. Securities and Exchange Commission adopted rules that require public companies to disclose cybersecurity incidents within four business days of determining that such an incident is “material.” And the U.S. Department of Justice now mandates that companies safeguard government-related and “bulk” sensitive personal data on Americans.

Edgar says that voluntary efforts by corporations and others to improve their cybersecurity are far more important than government regulation. The federal government provides tools, among them the Cybersecurity Framework of the National Institute of Standards and Technology, to help companies strengthen their own defenses.

In the United States, Edgar explains, there has always been “a balance between strong rules and protections and not wanting to infringe on privacy and civil liberties or on innovation and competition.”

“Our whole approach has been less of a top-down approach and more cooperatively working with the private sector,” he says.

Incentivizing Cooperation

Tracy Wilkison ’96, a longtime federal prosecutor who served as the United States attorney for the Central District of California between 2021 and 2022 and now advises clients on cybersecurity matters as a senior managing director at FTI Consulting, says a big part of her outreach to companies as a prosecutor was trying to convince them to work with the federal government when they did experience a breach.

“We wanted them to know, ‘We’re your friend; we think of you as the victim,’” she explains. “‘We’re trying to get the real bad guys.’”

Not only does cooperation make identification and prosecution of perpetrators more likely, it can also reveal the full scope of a threat that may at first seem isolated or random. Carlin recalls the case of Ardit Ferizi, who hacked into a U.S. company and stole personally identifiable information, including about members of the U.S. government and military. He demanded a single bitcoin as ransom, worth about $500 at the time. After the company reported the crime, prosecutors learned that Ferizi had sold the information to a terrorist who called on his followers to target the individuals. (The terrorist was later reportedly killed in a U.S. drone strike; Ferizi was extradited from Malaysia and sentenced to 20 years in prison.)

“That shows how difficult it can be for a victim company to assess what the threat is or what actually occurred unless they talk to the government,” Carlin says.

Figuring out how to share information between the government and private sector at scale and speed is still something the U.S. is wrestling with, he adds, although there have been improvements.

“It doesn’t need to be all stick,” he says.

The SEC’s 2023 rules requiring disclosure of a cyberattack, for instance, make an exception for cases in which disclosing the hack would threaten national security or public safety. Because the U.S. attorney general must make such a determination, taking advantage of that carveout requires cooperation with the government.

Planning for the Worst

Wilkison says she can remember the first cyberattack she dealt with as a prosecutor — a business email compromise in which the perpetrator was able to divert funds from one bank account to another.

“It was this whole new approach to the old crime of stealing money,” she says.

Back then, the threat was novel and surprising. At this point, it’s a known risk in an increasingly digital world.

Now that she’s in the private sector, part of Wilkison’s job is to help companies think through how they would respond to a cyberattack, including through “tabletops” during which high-level personnel respond to a simulated attack.

“You have to have a really good cybersecurity program and play it out: Who’s in charge? How are we going to communicate this to our employees? Are we going to pay a ransom?” she says. “These are not decisions you can make in the moment.”

Jonathan L. Zittrain ’95, Harvard Law School’s George Bemis Professor of International Law and faculty director of the Berkman Klein Center for Internet & Society, says security was sacrificed early in favor of flexibility and innovation in the design and architecture of personal computing devices and the internet.

“It’s a genuine trade-off,” he says. “To be able to have reprogrammable PCs connecting to an internet that can take you anywhere and connect you to anybody before you’ve even established who they are — that is both extremely cool, and also don’t be surprised that you have a fundamental security problem. Any kind of answer is a mitigation, a kind of nibbling around the edges” unless people are walled off from the internet.

Zittrain says he argues instead for “resiliency.”

“Instead of trying to achieve maximum security through lockdown, you should figure out how not to have your entire life’s work trivially available if your password is compromised,” he says.

Carlin, who has advised OpenAI on safety and security issues, has said he’s optimistic that society can “innovate our way out” of cybersecurity risks, in part by designing protections on the front end of new technologies, rather than trying to patch up holes on the back end. Artificial intelligence may help — by making it easier for companies to detect anomalies in their systems — but it also may make it easier for bad actors to sift through compromised data for the most valuable information.

“This is a long-term cat-and-mouse game,” Eichensehr agrees, “where the good guys are trying to constantly improve security and adversaries are constantly trying to breach security. We’ve been fairly lucky so far, but it’s hard to tell how long that luck will last.”