Early in 2020, a cell phone captured the fatal attack on a jogger named Ahmaud Arbery by three white men in Georgia. That video was critical evidence in the criminal cases against the assailants, who were convicted of murder, and it helped fuel a nationwide wave of Black Lives Matter protests that year.

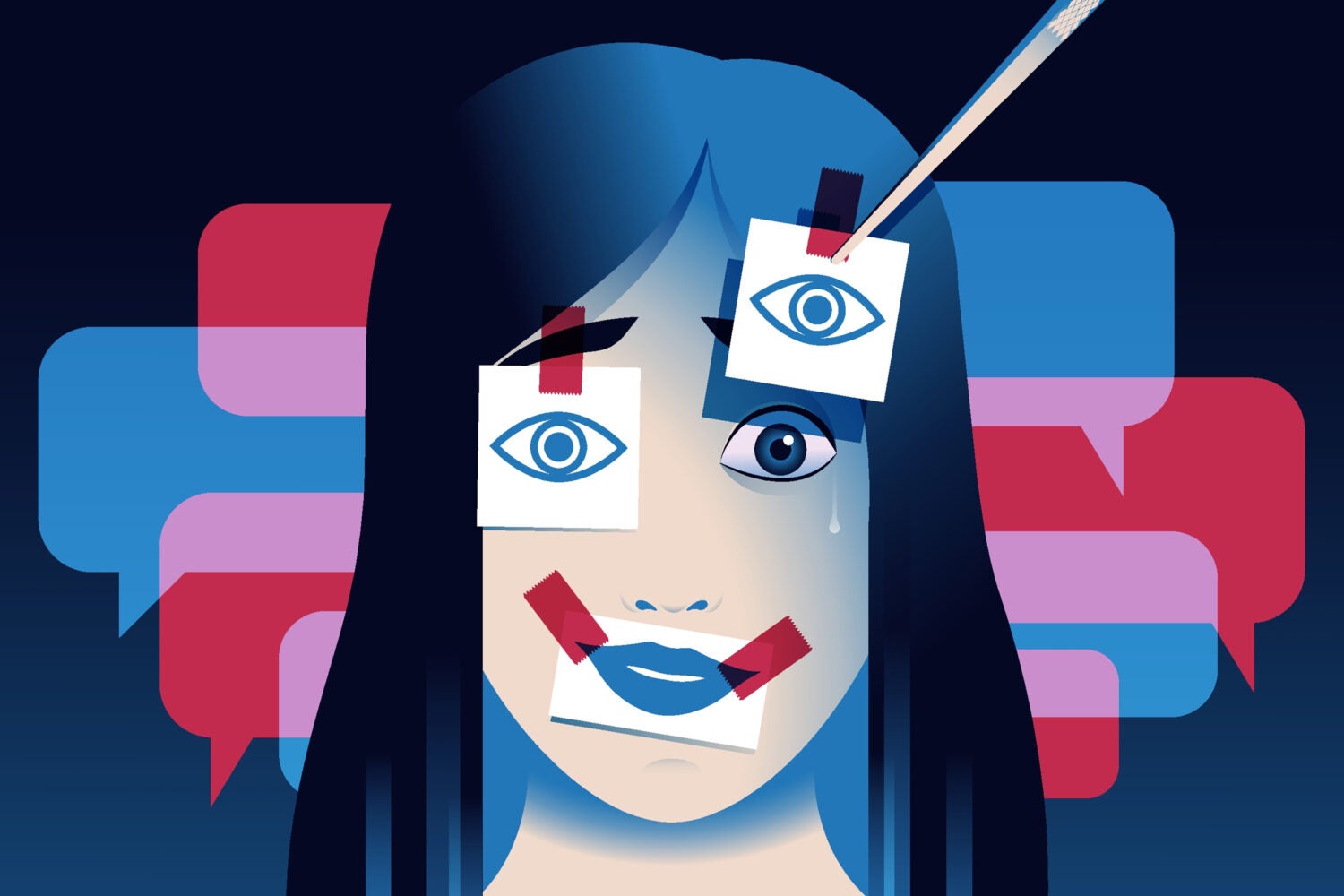

Just six years later, the landscape for recorded media looks markedly different: artificial intelligence tools have become ubiquitous, and AI-generated text, audio, and video have flooded the internet. Can we still believe the things we see, hear, and read? And how could our newfound doubt affect our ability to seek justice under the law?

Duncan Levin is a white-collar defense attorney, a former federal prosecutor with the U.S. Department of Justice, and a lecturer on law at Harvard Law School, where in the winter term he taught “Cash, Crime, and the Constitution: The Legal Frontiers of Asset Forfeiture and Money Laundering.” He argues that as AI-generated media blurs reality, the technology has the potential to “destabilize” some aspects of the criminal justice system.

“I think AI may change not only what people think about evidence, but what they think evidence is,” says Levin.

Levin argues that as people become more skeptical of long-accepted types of evidence, prosecutors, defense attorneys, and judges will have to radically rethink their approach. But while he believes this adjustment may be painful in the short term, over the long haul it could “force the system to become more sophisticated.”

In an interview with Harvard Law Today, Levin shared his views on how artificial intelligence could impact the criminal trial — and why, all told, AI could serve as a “healthy pressure, even if it is an uncomfortable one.”

HLT: In your experience as a prosecutor and defense attorney, what unique problems might AI pose in criminal cases as opposed to civil litigation?

Levin: Most of the conversation about AI in law has centered on civil practice, and understandably so. Civil litigation is where questions of efficiency, cost, scale, and automation are most visible. And of course, the courts have been dealing with the problem of lawyers citing to “hallucinated” cases. But criminal law presents a different problem altogether because criminal trials are not just about resolving disputes efficiently. They are about the state’s attempt to take liberty from an individual, and they are structured around a constitutional burden of proof designed to minimize the risk of factual error.

That is why AI poses a more profound challenge in criminal cases than in civil ones. In a civil case, if the authenticity of a document or recording is disputed, that may affect weight, credibility, or liability. In a criminal case, the same uncertainty can operate at a deeper level, because the government is required to prove guilt beyond a reasonable doubt. The possibility that an exhibit may be synthetic, manipulated, or otherwise unreliable is not just another evidentiary skirmish. It can go directly to whether the prosecution has met the burden that due process demands.

There is also a structural difference in the function of evidence. Criminal cases often depend on a relatively narrow set of key exhibits — a recording, a video, a sequence of text messages, a social media post, a geolocation trail. Those exhibits are often presented to juries not as peripheral details but as anchors of narrative truth. AI threatens to destabilize that. It introduces the possibility that what appears to be the most concrete evidence in the case may in fact be the least secure.

And that challenge cuts in both directions. As a former prosecutor, I can see how AI may make it harder for the government to secure convictions in cases that rely heavily on digital proof. As a defense lawyer, I can also see the danger that once skepticism becomes generalized, defendants may have a harder time persuading juries with authentic exculpatory evidence. So, the issue is not simply that AI helps one side or the other. It puts pressure on the truth-finding function of the criminal trial itself.

HLT: What kinds of evidence currently admissible in criminal trials might be at risk of being created or manipulated by AI?

Levin: The obvious answer is audio and video, because those are the forms of evidence people most readily associate with deepfakes. If a jury hears what sounds like the defendant’s voice, or sees what appears to be a defendant on video, that evidence can be enormously powerful. But the real problem is broader than the familiar deepfake example.

Modern criminal prosecutions rely heavily on digital artifacts of all kinds: photographs, surveillance footage, text messages, emails, direct messages, social media posts, screenshots, online account records, and digitally generated timelines of location or activity. Each of those forms of evidence carries with it an implied claim of authenticity. It says, in effect, this happened, this was said, this account was used, this image reflects reality. AI makes those implied claims easier to counterfeit and harder for laypeople to assess.

What concerns me especially is that the danger is not limited to wholesale fabrication. AI may also be used to alter, enhance, splice, recontextualize, or simulate evidence in subtler ways. A manipulated image need not be entirely fabricated to mislead. A voice recording need not be entirely synthetic to create a false impression. A sequence of messages can be selectively generated or altered in ways that preserve surface plausibility while changing substantive meaning. In other words, the challenge is not just false evidence; it is persuasive false evidence.

There is also what I think of as the atmospheric effect of AI. Even where the evidence is genuine, jurors may know enough about digital manipulation to wonder whether it is fake. So, AI changes not only the risk profile of false exhibits entering the courtroom. It changes the epistemic status of real exhibits as well. Evidence that once seemed solid may now seem contingent. That is a profound development in a system that has long relied on jurors’ common-sense confidence in what they can see and hear.

HLT: What are the traditional methods of authentication and reliability for evidence in a criminal case, and how does AI challenge those longstanding doctrines?

Levin: Traditionally, authentication has been understood as a threshold requirement, not an ultimate one. Under the rules of evidence, the proponent need only offer sufficient evidence for a reasonable juror to conclude that an exhibit is what it is claimed to be. That burden has never been especially onerous. A witness with knowledge may identify a photograph. Someone familiar with a speaker’s voice may identify an audio recording. A chain of custody may support the admission of physical or digital evidence. Distinctive characteristics, surrounding circumstances, and metadata may all contribute to the foundation.

That framework reflects an important doctrinal assumption: Authentication is a gatekeeping inquiry, and the adversarial process can do the rest. Courts have long assumed that the principal risks involve ordinary tampering, misidentification, or incomplete handling. Those are serious issues, of course, but they are still issues within a world where the underlying exhibit is presumptively tethered to some real event or communication.

AI complicates that premise because it attacks the distinction between authenticity and plausibility. A piece of evidence may now look authentic, sound authentic, and fit coherently into surrounding facts, while still being entirely or materially synthetic. That matters because many of the doctrines we use to authenticate evidence are not really designed to test whether the artifact itself was born of reality. They are designed to test whether there is enough circumstantial basis for a jury to consider it.

HLT: Could you give us an example?

Levin: Take voice identification. The classic question is: Do you recognize the speaker’s voice? A witness may answer that question honestly and persuasively. But that only establishes familiarity with the voiceprint; it does not resolve whether the recording is a genuine capture of an actual conversation rather than an artificial simulation. The doctrine assumes that voice recognition is doing more work than it may in fact be capable of doing in an age of generative audio.

The same is true more broadly. Distinctive characteristics may no longer be very distinctive. Metadata may be vulnerable to manipulation or may require expert interpretation. Chain of custody remains important, but chain of custody alone may not answer whether a digital artifact was altered before it entered the chain. So, the legal system may increasingly find that doctrines built to address tampering are inadequate for a world of synthetic creation.

That is why I think this is a doctrinal problem, not just a technological one. AI exposes that the law of authentication rests on assumptions about the nature of evidence that may no longer hold.

HLT: Most of us have heard of the requirement that a jury find a defendant guilty “beyond a reasonable doubt.” What does that mean? Could the possibility of fabricated digital evidence affect the meaning of proof beyond a reasonable doubt?

Levin: “Beyond a reasonable doubt” is the law’s way of expressing a moral and constitutional judgment that when the state seeks to deprive someone of liberty, ordinary confidence is not enough. The standard does not require certainty in an absolute sense, and it does not mean beyond all possible doubt. But it does reflect a deliberate choice to tolerate some risk that guilty people will go free in order to reduce the risk that innocent people will be wrongly convicted.

That principle assumes, however, that jurors are evaluating evidence in a world where the central questions are credibility, consistency, perception, memory, and corroboration. In other words, the standard developed in a setting where doubt typically attaches to witnesses or interpretations of facts. AI introduces a different layer of doubt. It raises the possibility that the exhibit itself — the recording, the image, the post, the message — may not be what anyone says it is.

That distinction matters. A juror may conclude that a witness is sincere and still have reasonable doubt because the underlying exhibit seems potentially synthetic. So, AI may affect the operation of the reasonable-doubt standard not by changing its formal definition, but by changing the kinds of uncertainty that jurors bring to their deliberations. The question becomes not just “Do I believe this witness?” but “Do I believe the ontology of this evidence at all?” That is a more foundational inquiry.

In that sense, AI may sharpen the reasonable-doubt standard by making visible something that was once implicit: proof is not simply about persuasion, but about epistemic confidence in the reality of the proof itself. If jurors begin to feel that digital evidence carries an irreducible possibility of artificiality, that may alter the practical meaning of the government’s burden even without any doctrinal change in the jury instructions.

The larger point is that reasonable doubt is not just a verbal formula. It is a mechanism for allocating the risk of uncertainty in criminal adjudication. AI may dramatically increase one category of uncertainty — uncertainty about authenticity — and if that happens, the burden of proof will feel heavier in practice, because jurors will be less willing to treat digital exhibits as fixed points of reference. That is why AI has the potential to reshape not merely evidence law, but the lived meaning of the constitutional standard itself.

HLT: What new burdens, if any, should be placed on parties to verify that evidence is not AI-manipulated? Who should bear that burden?

Levin: In criminal cases, the answer has to begin with first principles. The government bears the burden of proof. It is the government that seeks conviction. So, if digital evidence is central to the prosecution’s case, the prosecution should bear the primary burden of establishing authenticity with greater rigor than courts have often demanded in the past.

I do not mean that every case should require a parade of experts or a mini-trial on digital forensics. The law has to remain workable. But I do think courts will need to move away from the instinct that a thin foundation is always enough, and that any deeper concerns go only to weight. In some cases, especially those turning on audio, video, or disputed digital communications, authenticity may require more than witness familiarity or a simple chain of custody. It may require forensic examination, clearer provenance, stronger metadata analysis, or expert testimony sufficient to assure the court that the exhibit has not been materially altered or synthetically generated.

At the same time, I would not frame this only as a burden on prosecutors. If a defendant seeks to introduce affirmative digital evidence, courts may also require a stronger showing of authenticity. But the asymmetry still matters. A defendant does not carry the burden of proving innocence. So, while both sides may need to satisfy more robust evidentiary standards, the constitutional burden should remain where it belongs: on the state when it seeks to imprison.

Over time, I suspect the law will move toward a combination of doctrinal and technological solutions. On the doctrinal side, courts may become more exacting under existing authentication rules. On the technological side, provenance tools, cryptographic signatures, or secure capture systems may emerge as more reliable ways of showing authenticity at the point of creation. But until those systems are more widespread, judges will have to decide whether traditional foundations remain adequate in a world where fabrication is easier, cheaper, and harder to detect.

So, the real burden is not just evidentiary. It is institutional. Courts will need to decide how much uncertainty the criminal process can tolerate before the risk of error becomes unacceptable.

HLT: In your view, how might AI influence the way people think about evidence and the criminal justice system in general?

Levin: I think AI may change not only what people think about evidence, but what they think evidence is. For a long time, visual and digital evidence carried an aura of objectivity. People might dispute what a witness remembered or why a witness was lying, but a video, a recording, or a contemporaneous text exchange often felt like a harder kind of proof. That intuition was always somewhat overstated, but it was real and legally important. AI weakens it.

Once the public internalizes that highly realistic digital evidence can be fabricated, the entire evidentiary environment changes. People may become more skeptical, more cautious, and in some settings more cynical. That skepticism has some virtue. Criminal law should not be built on naïve faith in official narratives or in the infallibility of seemingly objective evidence. But skepticism can also become corrosive if it dissolves confidence indiscriminately.

That is the larger institutional concern. The justice system depends on public confidence not only that trials are fair, but that they are capable of reaching truth through lawful procedures. If jurors begin from the premise that any recording may be fake, any image may be manufactured, and any digital communication may be spoofed, then the criminal trial risks becoming less an exercise in fact-finding than an exercise in generalized epistemic anxiety. That is not healthy for either side.

At the same time, this moment may force the system to become more sophisticated. Judges, lawyers, jurors, and investigators may all need to become more literate about the creation, preservation, and forensic evaluation of digital evidence. Courts may have to speak more candidly about what they do and do not know. In that sense, AI may force a deeper legal honesty. It may make explicit that evidentiary trust has always rested on a combination of doctrine, technology, and social confidence.

So, I think the long-term influence of AI will be double-edged. It may deepen mistrust in the short term, but it may also push the criminal legal system toward a more rigorous and transparent account of what it means to prove something. And in a system built around the idea that liberty should not be taken without very high confidence, that is ultimately a healthy pressure, even if it is an uncomfortable one.
